Problem

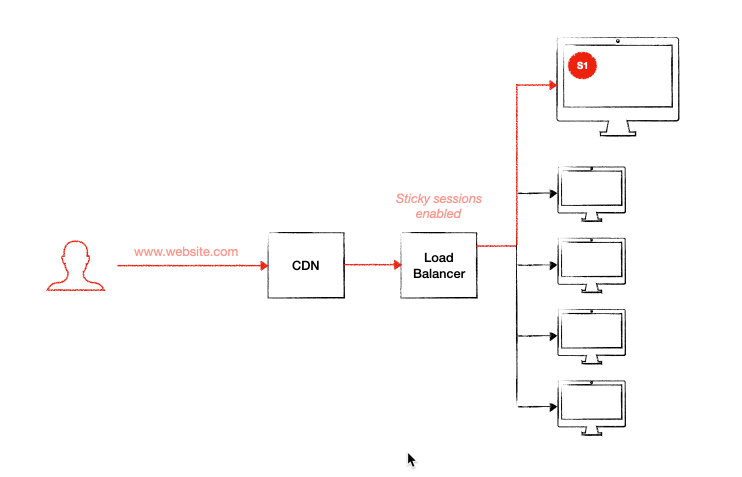

One of the websites we look after is an e-Commerce website running on 5 web servers, load balanced in AWS with sticky sessions enabled. The (simplified) architecture is shown above.

There are several issues with running sticky sessions on an e-Commerce website. One of them is that customers will inevitably lose their session when a web server becomes unavailable. This can happen when:

- A web server is kicked out of the load balancer because it fails health checks

- The environment scales up or down

- Maintenance work is done on a web server (e.g. applying patches)

Because the customer’s session is sticky to just one web server, the other web servers won’t know of this session. And so when the customer is redirected to another server for any of the reasons mentioned above, the customer’s experience may be impacted as the session is re-created.

So what’s the problem with sessions being re-created on the other web servers?

If your website does not rely on sessions and you’re not storing any information against the session that’s crucial to the customer experience, you should be fine. Sessions can be re-created on another web server and your website will carry on as normal.

However, if you do have information being stored against the session, then all this information will be lost when the user is directed to the other web servers.

Inconsistent cart behaviour

This was the actual problem we experienced. The moment we disabled sticky sessions at the load balancer, the user’s cart became inconsistent. A customer would add a product to the cart, view the checkout page, and suddenly the newly added product was gone.

Cart behaviour in Episerver

Before we talk solutions, let’s understand first why the cart becomes inconsistent when sticky sessions are disabled.

Carts are not stored in the session

The first thing we need to understand is that carts in Episerver are not stored in the session. So even if you had a shared session state provider across all web servers, you may still experience this issue.

All the cart information is in the database, whether you’re logged in or not. If you’re logged in, the cart is linked to the customer identifier. Otherwise, for those who are not logged in, the cart is created against the .NET AnonymousId cookie present in the user’s browser. This is why the cart information remains even if the user has not logged in and revisits the site days later on the same browser.

Carts are stored in DB and cached in memory (in-proc)

It turns out that when a cart is loaded from the database for the first time for a user (logged in or not), the cart information is stored in the in-memory object cache of that specific web server for the next 10 minutes (at least in Episerver Commerce 12). The cart is cached against a key that combines all three of the following:

- CustomerId/AnonymousId

- CartType (e.g. default or wishlist)

- MarketId

What this means is that if a user navigates the website with a cart across multiple web servers (sessions are not sticky), each of those web servers will have the cart cached in memory. The moment the user adds a product to the cart (via web server 1, let’s say), the other web servers (2, 3, 4, …) still hold their cached version of the cart until their 10-minute cache entry expires. If the user is directed to any of these servers during that window, they will see an outdated cart. And this was our very issue.
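The stale-cart behaviour described above can be reproduced with a small simulation. This is only a sketch in Python, not Episerver code: `WebServer`, `load_cart`, `add_to_cart` and the `database` dictionary are hypothetical names, and the only details taken from the description above are the 10-minute lifetime and the three-part cache key.

```python
from dataclasses import dataclass, field

CACHE_TTL_SECONDS = 600  # 10 minutes, as described above

# Hypothetical stand-in for the shared cart database: key -> list of product codes
database = {}


@dataclass
class WebServer:
    name: str
    # Per-server in-process cache: key -> (cart snapshot, expiry time)
    cache: dict = field(default_factory=dict)

    def load_cart(self, key, now):
        cached = self.cache.get(key)
        if cached is not None and cached[1] > now:
            return cached[0]  # served from local memory, possibly stale
        cart = list(database.get(key, []))
        self.cache[key] = (cart, now + CACHE_TTL_SECONDS)
        return cart

    def add_to_cart(self, key, product, now):
        cart = list(database.get(key, [])) + [product]
        database[key] = list(cart)
        # Only this server's cache is refreshed; the others know nothing about it
        self.cache[key] = (cart, now + CACHE_TTL_SECONDS)
        return cart


# Cache key: (CustomerId/AnonymousId, CartType, MarketId); values are made up
key = ("anon-123", "Default", "US")
web1, web2 = WebServer("web-1"), WebServer("web-2")

web1.load_cart(key, now=0)               # both servers cache the empty cart
web2.load_cart(key, now=0)
web1.add_to_cart(key, "SKU-1", now=5)    # the write goes through web-1

stale = web2.load_cart(key, now=10)      # web-2 still serves its cached copy
fresh = web2.load_cart(key, now=601)     # after expiry, web-2 reloads from the database
```

Here `stale` is still the empty cart while `fresh` contains `SKU-1`, which is the same pattern we saw on the checkout page.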

Solution

There are a couple of ways to fix this.

Option 1 – Synchronise in-memory cache between web servers

Episerver has a built-in cache synchroniser that notifies the other web servers to invalidate their cache for a particular object. This forces all other web servers to bust their cached version of the cart (or any other type of information) whenever it changes.

This fix requires remote events to be configured between your front-end web servers or web apps so that they can talk to each other.

- For Azure App Service web apps (self hosted) – follow this tutorial about creating an Azure Service Bus and setting up publish/subscribe between web apps

- For AWS Elastic Beanstalk apps – you will have to configure publish/subscribe using SNS, by creating a topic and configuring the Elastic Beanstalk apps to subscribe to this topic

- For web servers – ensure that remote events are set up between the web servers. Follow this tutorial if you have not set one up before.
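The idea behind Option 1 can be sketched as a publish/subscribe invalidation. The Python below is a minimal illustration under stated assumptions, not Episerver's actual synchroniser: `EventBus` is a hypothetical in-process stand-in for whatever transport carries the remote events, and the cache expiry is left out for brevity.

```python
class EventBus:
    """Hypothetical stand-in for the remote-events channel between servers."""

    def __init__(self):
        self.subscribers = []

    def subscribe(self, handler):
        self.subscribers.append(handler)

    def publish(self, sender, key):
        for handler in self.subscribers:
            handler(sender, key)


class WebServer:
    def __init__(self, name, database, bus):
        self.name = name
        self.database = database   # shared cart store: key -> list of product codes
        self.bus = bus
        self.cache = {}            # per-server in-process cache
        bus.subscribe(self.on_invalidate)

    def on_invalidate(self, sender, key):
        # Drop the local copy when another server reports a change
        if sender != self.name:
            self.cache.pop(key, None)

    def load_cart(self, key):
        if key not in self.cache:
            self.cache[key] = list(self.database.get(key, []))
        return self.cache[key]

    def add_to_cart(self, key, product):
        cart = list(self.database.get(key, [])) + [product]
        self.database[key] = cart
        self.cache[key] = list(cart)
        self.bus.publish(self.name, key)  # tell the other servers to bust their copy


database = {}
bus = EventBus()
web1 = WebServer("web-1", database, bus)
web2 = WebServer("web-2", database, bus)
key = ("anon-123", "Default", "US")  # made-up (AnonymousId, CartType, MarketId)

web2.load_cart(key)                  # web-2 caches the empty cart
web1.add_to_cart(key, "SKU-1")       # web-1 writes and publishes an invalidation
cart_on_web2 = web2.load_cart(key)   # web-2 reloads from the database
```

Because web-2 dropped its cached entry when the event arrived, `cart_on_web2` reflects the newly added product. If the event never reaches web-2, it keeps serving the old cart, which is why the servers must be able to talk to each other.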

Option 2 – Use a shared / centralised memory cache provider

You can use something like Redis, where you not only share your memory cache but can also use it as a shared session state provider.

Putting the extra infra cost aside, I can see this being the preferred option, for these reasons:

- Performance and reliability

- No need to manually sync in-memory caches

- Stability – with Option 1 above, if the TCP service fails on one of the web servers, the cache synchroniser will be crippled.
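To illustrate why Option 2 needs no manual syncing, here is a minimal Python sketch in which every web server reads and writes the same cache. `SharedCache` is a hypothetical in-memory stand-in for a central provider such as Redis; a real setup would talk to that provider over the network and would normally set an expiry on each entry.

```python
class SharedCache:
    """Hypothetical in-memory stand-in for a central cache such as Redis."""

    def __init__(self):
        self._store = {}

    def get(self, key):
        return self._store.get(key)

    def set(self, key, value):
        self._store[key] = value


class WebServer:
    def __init__(self, name, database, shared_cache):
        self.name = name
        self.database = database          # shared cart store: key -> list of product codes
        self.shared_cache = shared_cache  # the same instance for every server

    def load_cart(self, key):
        cart = self.shared_cache.get(key)
        if cart is None:
            cart = list(self.database.get(key, []))
            self.shared_cache.set(key, cart)
        return list(cart)

    def add_to_cart(self, key, product):
        cart = list(self.database.get(key, [])) + [product]
        self.database[key] = cart
        # One write updates the copy that every server reads from
        self.shared_cache.set(key, list(cart))


database = {}
shared = SharedCache()
web1 = WebServer("web-1", database, shared)
web2 = WebServer("web-2", database, shared)
key = ("anon-123", "Default", "US")  # made-up (AnonymousId, CartType, MarketId)

web2.load_cart(key)                  # the empty cart lands in the shared cache
web1.add_to_cart(key, "SKU-1")       # web-1 overwrites the shared entry
cart_on_web2 = web2.load_cart(key)   # web-2 sees the update straight away
```

There is only one cached copy of the cart, so there is nothing for the servers to invalidate between themselves.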

Conclusion

If you decide to disable sticky sessions in a load-balanced environment, you need to ensure that session state and in-memory caches are either synchronised between the load-balanced web servers or shared through a provider like Redis. This ensures that your customers’ experience is not impacted as they are directed from one web server to another.

Hope this helps anyone and saves them hours of investigating inconsistent carts!